We recently caught up with Avi Bhatnagar, Senior Director of Digital Strategy at WhiteHat Security, to learn about how he increased website traffic and leads using the BrightEdge Smart Content framework. Avi oversees several marketing channels and often comes up with a new approach for one program that he later reapplies elsewhere. The interview below covers his recent experiences.

Smart Content framework interview

BrightEdge: Avi, what does WhiteHat Security do?

Avi Bhatnagar: WhiteHat Security has been in the business of securing applications for over 15 years. It offers a cloud service that allows organizations to bridge the gap between Security and Developers. We are the leading application security provider that combines the best of technology and human intelligence to ensure a safe digital life for our customers.

BE: You’ve been focusing on brand awareness and top-of-the-funnel traffic lately. Tell us about that.

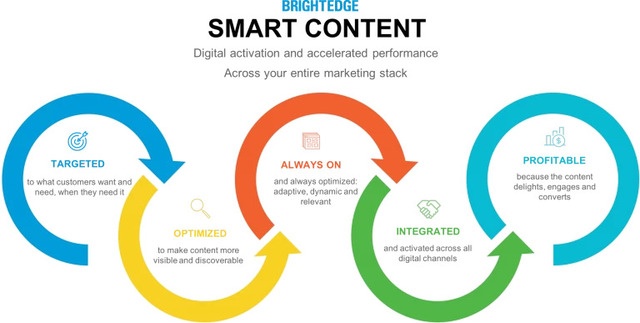

AB: Yes, as we all know, the last few years have seen an enormous increase in data breaches, malware attacks, and other security nightmares. Just a few months ago, several high-profile data breaches were reported, including the news about Equifax. It became a priority to educate security teams and developers about the importance of security best practices in the application development process, as opposed to treating security as an afterthought. Increasing brand awareness through valuable content became a clear opportunity. Therefore, we developed a Knowledge Center glossary that our audiences can use as a source of Quick Answers. We used a framework called Smart Content to ensure that those new website pages would be optimized for search engines and would reach a large audience much faster than traditional content does.

BE: How did you apply the Smart Content framework?

AB: Traditional content development starts with an ideation process around the topics that are aligned with our integrated marketing campaigns. It’s a very inside-out approach to content development: the story is based on a particular topic, so the persona is limited to a specific target audience. Marketing this content to a niche persona is always a challenge.

Smart Content takes an outside-in approach. It evaluates the marketplace of ideas: what questions prospects and customers are asking, and how well brands and their competitors are addressing those questions with quality content. We took a comprehensive approach by partnering with our Engineering teams to define topics that were outside of our product taxonomy. We looked at specific questions that our target audience raised around security testing tools, application vulnerabilities, and specific threats and attacks. We leveraged the results from our Stats Reports and developed glossary entries specifically for these topics.

More and more brands are coming to realize that search engines are a great way of reaching new audiences and establishing wider brand awareness. In traditional content development, a writer creates a piece of content, publishes it, and then a search engine optimization (SEO) professional needs to optimize the content to make it more visible in search. Smart Content flips this approach on its head: it injects all the SEO and mobile-friendliness wisdom into the content during the writing process, which helps ensure that the content is optimized for SEO from the get-go. Smart Content makes it easy for search engines to understand the content, and so the content ranks high on search result pages.

BE: There’s almost a parallel between Smart Content, which bakes SEO into the content writing process, and WhiteHat Security, which bakes security practices into the application development process.

AB: It’s almost poetic, isn’t it? Yeah, maybe that’s why I got so drawn to Smart Content.

BE: You were able to increase website leads too, which is a primary business metric for you guys.

AB: Yes, we increased our lead conversions by 150% year-over-year by implementing this type of Smart Content. Again, in traditional content development, the messaging is focused on writing the value statements, but risks neglecting content performance. The Subject Matter Experts (SMEs) write to explain things, but oftentimes pay little or no attention to the marketing funnel or customer journey. Smart Content focuses on content performance: from prioritizing content topics that have strong demand yet weak competition, to adding relevant internal links that help bots and humans find the content and act on the desired CTA (Call to Action). Performance is key.

BE: So what happened next?

AB: The glossary took off immediately and started to generate almost 10% of our overall traffic within months. With Smart Content, we have increased our organic search traffic by 60% year-over-year. I was amazed by how fast the content performed on the SERPs. So I developed a hypothesis that maybe I could also increase my SEM efficiency if I tied my paid search ads to the Smart Content pages.

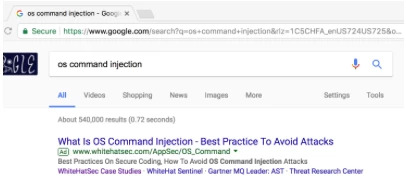

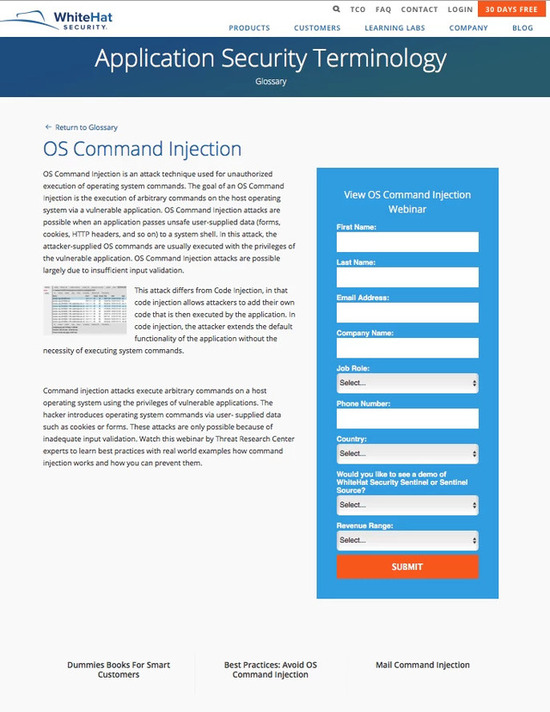

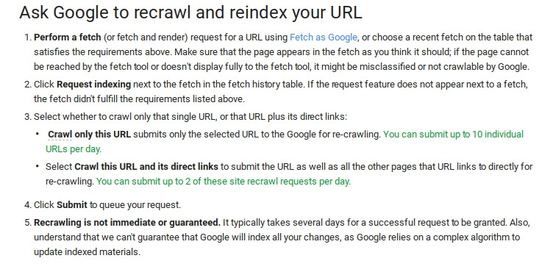

I ran an experiment for Integrated Search - SEO + SEM alignment. I placed bids on some of my glossary terms and set my Smart Content pages as the landing pages in my SEM ads. Below is an example for the term "os command injection," for which we rank in position six organically.

My destination URL is the Smart Content glossary page for this term. The content is well-tuned for SEO and has a clear CTA directly related to the topic. I also included dynamic content recommendations at the bottom of the page to keep non-converting readers from abandoning the site.

Using Smart Content to power paid search campaigns has proven fruitful: because the pages were so well tuned, the Quality Scores increased from a site average of 3.4 to 6.1. As a result, I was able to maintain my ad positions while reducing Cost-Per-Click by about 15%.

When I compared the Smart Content SEM campaigns with the traditional SEM campaigns, Click-Through-Rate increased by 55%, and Form Submissions - our measure for lead generation activities - increased by a substantial 125%.

BE: What was the reaction when people saw the results?

AB: The extended team was ecstatic to have such positive results and see the true value of investing in organic and paid search together. I used the results to make a case for - and was able to secure - additional SEM budget. I will increase the spend on paid search ads that will drive relevant new visitors to my Smart Content pages.

BE: What’s the key lesson from all of this?

AB: Marketing organizations need to realize that creating content to tell a story isn’t enough. The new mindset to adopt is "if you optimize it, they will come." Content should function more like connective tissue between what customers are looking for and the actions that brands ultimately want them to take.

Content development must be pragmatic: it should focus on the precise interests and intents that your target audiences care about the most, some of which may seem to be outside of your product terminology, blog topics, or typical taxonomy. While it’s important to provide something interesting, useful, or shareable, branded content should also clearly lead readers to conversion points, so that they don’t have to guess what to do next.

This helps to continuously build your website into a marketing engine.

BE: Thanks for sharing your experiences with us.

AB: My pleasure. And if anyone wants to learn more, feel free to DM me on Twitter: @avibay.
