monique.johnson

Posted 4 years 1 month ago

9 min read

Updated: February 2022

Long-tail keywords account for over 90% of search queries.

Accessing that traffic pool can be incredibly profitable. The good news is that identifying, targeting and ranking for long-tail keywords is a straightforward and cost-effective process.

In this post, we’ll clear up some common misconceptions about long-tail keywords, cover the benefits of having a dedicated long-tail strategy, and describe how to conduct thorough, extensive keyword research.

Where Does the Idea of the “Long Tail” Come From?

The concept of the “long tail” has been instrumental in shaping how organizations understand markets. Chris Anderson popularized the concept in a 2004 Wired article and his 2006 book The Long Tail: Why the Future of Business is Selling Less of More, but statisticians have been studying it in various forms since at least the 1950s.

In his now-famous article in Wired and his subsequent book, Chris Anderson argued that there is more profit to be made by selling lots of different products, each with low demand and few competitors, than by attempting to create one big hit that relies on leveraging unified demand in a crowded space. The advent of democratized marketplaces with low barriers to entry - just like the good ol’ internet - has made this approach possible.

Applied to search engine optimization, the idea is simple: target lots of low-volume, low-competition keywords instead of wastefully expending resources on highly competitive, high-volume counterparts.

Now, while that strategy looks uncomplicated on paper, it’s a little more multifaceted in practice. So let’s dig into the specifics.

What Are Long-Tail Keywords?

There is no shortage of definitions of the term “long-tail keyword” on the web. But despite its importance and uniqueness, search engine experts still get things wrong when describing the concept.

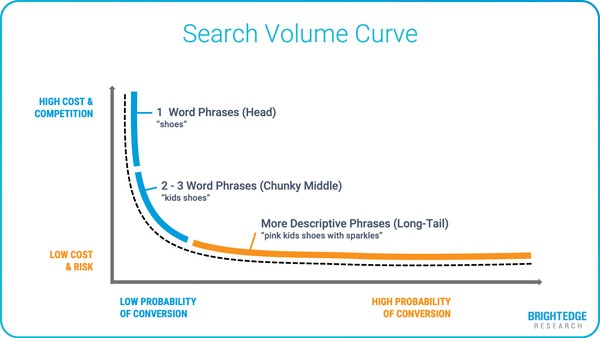

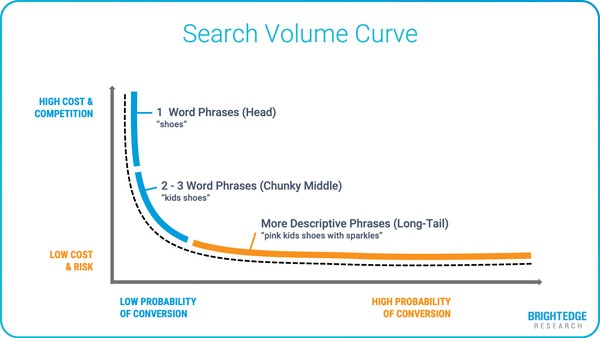

Long-tail keywords are search queries that have relatively low search volumes compared to high-volume “head keywords.” You can understand this idea in terms of specific, thematically related groups of keywords, say around the topic of “home improvement,” or as applied to the totality of search queries on Google (or any other search engine) over a given time.

Low-volume queries sit on the “tail” of a curve that maps search volume on the y-axis against keywords, ranked from most to least searched, on the x-axis. If you could see the whole graph, the long tail would stretch for miles.

High-volume keywords comprise what is called the “head.” Middle-volume keywords are sometimes said to constitute the “thorax” or “chunky middle.”
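To make the head, middle, and tail split concrete, here is a minimal Python sketch. It assumes you already have monthly volumes for a group of related keywords. The cut-off shares, the function name, and the sample volumes are all made up for illustration; there is no official threshold for where the head ends and the tail begins.

```python
def split_head_middle_tail(volumes, head_share=0.5, middle_share=0.3):
    """Label each keyword as head, middle, or tail.

    Keywords are ranked by volume. A keyword is "head" while the
    keywords ranked above it cover less than head_share of total
    volume, "middle" for the next middle_share, and "tail" after that.
    Both shares are illustrative assumptions, not industry standards.
    """
    ranked = sorted(volumes.items(), key=lambda item: item[1], reverse=True)
    total = sum(volumes.values())
    labels = {}
    running = 0
    for keyword, volume in ranked:
        share_before = running / total if total else 0.0
        if share_before < head_share:
            labels[keyword] = "head"
        elif share_before < head_share + middle_share:
            labels[keyword] = "middle"
        else:
            labels[keyword] = "tail"
        running += volume
    return labels


# Made-up monthly volumes, for illustration only.
sample_volumes = {
    "golf": 6000,
    "golf clubs": 2500,
    "golf shoes": 1000,
    "bog snorkeling": 300,
    "used left-handed golf clubs": 150,
    "golf lessons for seniors in honolulu": 50,
}

labels = split_head_middle_tail(sample_volumes)
for keyword, label in labels.items():
    print(f"{label:<6} {keyword}")
```

Because the labels depend on each keyword's position relative to the rest of the group, the same query could be "tail" in one dataset and "middle" in another, which is the point of treating "low volume" as a relative idea.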

Misconceptions About Long-Tail Keywords

To say that long-tail keywords are phrases of multiple words with search volumes of ten or less isn’t entirely accurate, although this is often the case. There are many single-word long-tail keywords. What’s more, the term “low” has to be understood relatively for the concept of the long tail to make sense.

Another common mistake people make is to define long-tail keywords as always being highly specific. While this is usually true, there are exceptions. For example, the low-volume keyword “bog snorkeling” (yep, it’s a recognized sport) is just as semantically general as “golf.”

The key takeaway here is that long-tail keywords should be understood primarily in terms of volume (that is, the number of monthly searches). Applying other attributes just serves to needlessly muddy the waters and is usually unhelpful from a marketing perspective.

Why Are Long-Tail Keywords Important?

Long-tail keywords are important because they are effective at driving traffic. A well-executed long-tail keyword strategy can result in significant numbers of new visitors and high-value leads.

Here’s a quick rundown of the main reasons that long-tail keywords are worth your attention:

1. Long-Tail Keywords Have Low Competition

Long-tail keywords tend to be less competitive from a search perspective than high-volume keywords. As such, they are easier to rank for.

This is due to a mix of reasons. First, many companies focus exclusively on high-volume terms, leaving long-tail keywords wide open. Moreover, the sheer number of long-tail keywords means that competitor activity is more widely distributed.

2. Long-Tail Keywords Are Easy to Target From a Practical Perspective

Creating content for long-tail keywords is a relatively straightforward process. Specific terms typically only require short and precise explanations. For example, a query like “radiators” easily lends itself to an article thousands of words long, with numerous sub-sections. A term like “where to buy cheap radiators in Honolulu,” on the other hand, can be targeted with a comparatively brief piece of text.

It’s also possible to target numerous long-tail keywords within a single webpage or piece of content. Using long-tail keywords to structure content will enable your website to rank for terms that might otherwise have been missed. Additionally, there is little cost required to optimize longer-form content for long-tail traffic.

3. Long-Tail Keywords Have High Conversion Rates

Consider the difference between the keywords “water bottle” and “two-liter blue water bottle with a folding cap.” The second one carries a highly specific intent. As a result, the searcher responsible for typing it into Google is more likely to follow up by purchasing a product related to the keyword.

Because long-tail keywords are usually very precise, companies can tailor highly targeted offers and opt-in incentives to capture site visitors.

4. The Pool of Long-Tail Keywords Is Large

There are billions of long-tail keywords. You can’t see it on a typical graph because it has to be truncated for practical reasons, but the long tail goes on for miles. If you’re in a well-known industry, it will be practically impossible to run out of keywords. And even niche organizations will have their work cut out for them in attempting to capture even a portion of all available long-tail traffic.

How to Find and Use Long-Tail Keywords: A 5-Step Guide

Here are five general steps that can help form the basis of your long-tail keyword strategy:

1. Create Buyer Personas and Identify Broad Keyword Topics

Before you dig deeper and pinpoint specific terms, you need to identify the broad topics you will be researching. These topics will normally be defined in terms of generic keywords. Having clearly defined parameters will enable you to stay on track during the later stages of this process.

In particular, you should ask two questions:

- Who is your target audience?

- What topics are they interested in?

Keep in mind that your answers will likely be different depending on which part of the customer journey you’re considering. Profiles for first-time searchers will be different to those of returning visitors, and your long-tail keyword targeting should account for this.

2. Use a Keyword Research Tool Like Data Cube

Once you’ve identified “tier one” terms, enter them into a research solution like BrightEdge’s Data Cube. Data Cube has dedicated functionality for discovering long-tail keywords. You can use it to sort potential target queries by volume, competition, potential value, and more.

While it’s not unusual for SEOs to use ancillary tools and apps, particularly those that specialize in semantic keyword and long-tail query generation, it is good practice to leverage one high-quality solution as the basis for finding and organizing long-tail keywords. In this way, your workflow will have a central, easily accessible hub.

3. Evaluate Competitors

Competitor tracking is another effective way of identifying profitable long-tail keywords. The Share of Voice functionality within the BrightEdge platform allows you to uncover long-tail terms for which other sites are ranking.

Many of your competitors’ search results will not be the outcome of actively targeting a particular long-tail keyword. Often, existing content will be ranking “accidentally.” This presents you with an opportunity to create content of a greater quality and achieve better results.

4. Collect Questions From Community Spaces

Analyzing user-generated content on sites like Reddit, Quora, Facebook, Amazon and topical forums can give you a range of insights into the ways potential customers are talking about their interests and problems.

Trawling through forums and discussion boards is a time-consuming process, and you will still need to run gathered terms through your software. That said, you can find lots of hidden gems this way and, depending on their value to your company, it may be worthwhile as a long-term approach.
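If you have copied or exported forum posts as plain text, a small script can do the first pass for you. The sketch below pulls out sentences that end in a question mark and counts repeats. The function name and the sample posts are hypothetical, and the sentence splitting is deliberately crude, so treat the output as raw material to review rather than a finished keyword list.

```python
import re
from collections import Counter


def extract_questions(posts):
    """Return (question, count) pairs, most frequent first."""
    counts = Counter()
    for post in posts:
        # Crude sentence split: break on whitespace that follows ., ! or ?
        for sentence in re.split(r"(?<=[.!?])\s+", post):
            sentence = sentence.strip()
            if sentence.endswith("?"):
                # Normalize so the same question in different casing is merged.
                counts[sentence.rstrip("?").strip().lower()] += 1
    return counts.most_common()


# Hypothetical posts, written for this example only.
sample_posts = [
    "My spider plant is turning brown. How do I revive a dying spider plant?",
    "How do I revive a dying spider plant? I water it weekly.",
    "Can you dye curly hair naturally?",
]

questions = extract_questions(sample_posts)
for question, count in questions:
    print(count, question)
```

Questions that show up repeatedly are good candidates to run through your keyword research tool.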

5. Organize and Rank Long-Tail Keywords

Once you’ve collected a set of “raw” keywords that show promise, you should organize them into a coherent structure that can act as a guide for creating content. Metrics to consider when undertaking this process include value, relevancy, competition and, of course, volume.
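One simple way to combine these metrics is a single priority score. The Python sketch below is only an illustration: the formula, the 0-to-1 scales, and every number in the sample data are assumptions, not figures from any tool. In practice you would plug in whatever your research platform exports.

```python
from dataclasses import dataclass


@dataclass
class Keyword:
    phrase: str
    volume: int         # monthly searches
    competition: float  # 0.0 (wide open) to 1.0 (very crowded)
    relevancy: float    # 0.0 (off-topic) to 1.0 (perfect fit)
    value: float        # estimated value of one visit, in any currency


def priority(keyword):
    """Potential payoff, discounted by how hard the term is to rank for."""
    return (keyword.volume * keyword.value * keyword.relevancy
            * (1.0 - keyword.competition))


def rank_keywords(keywords):
    """Sort keywords from highest to lowest priority."""
    return sorted(keywords, key=priority, reverse=True)


# Made-up numbers, for illustration only.
raw_keywords = [
    Keyword("radiators", 9000, 0.95, 0.6, 0.10),
    Keyword("where to buy cheap radiators in honolulu", 40, 0.10, 1.0, 2.00),
    Keyword("radiator bleeding key", 200, 0.30, 0.8, 0.50),
]

ranked = rank_keywords(raw_keywords)
for kw in ranked:
    print(f"{priority(kw):6.1f}  {kw.phrase}")
```

With these sample numbers, the low-volume Honolulu query comes out on top because it is highly relevant, valuable, and barely contested, while the high-volume head term drops to the bottom. A different weighting could easily change the order, so adjust the formula to match your own goals.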

You should also account for the following semantic distinctions:

- Synonyms - The terms “how to get over the January blues,” “feeling down in January,” and “tips for beating January blues” are synonymous. They all mean the same thing. Google is smart enough to recognize this. Rather than creating individual pages for each one, you should target synonyms in the same piece of content.

- Primary terms - These terms will act as the main subjects of individual pieces of content. Some primary terms, like “how to dye curly hair naturally” or “how to revive a dying spider plant,” will be obvious in the sense that they cover quite a lot of ground. Others may look like secondary terms but actually warrant their own page. “How to dye curly hair naturally for women” could be added as a subtopic to an article about dyeing hair, for example, but will probably be targeted more effectively individually.

- Secondary terms - Secondary terms should constitute part of a larger piece of content. One of the best ways to decide whether or not to designate a term as a primary or secondary keyword is by checking existing results and seeing whether Google is ranking dedicated pages or ones covering a broader topic.

Organizing keywords semantically and topically isn’t an exact science. The decision of whether to create a new piece of content or update an existing one will often come down to personal judgment.
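Whatever you decide, it helps to record the decision somewhere structured. Here is a minimal sketch of a content plan in Python, where each page has one primary term plus its synonyms and secondary terms, along with a check for keywords that have ended up assigned to two pages. The class name, the helper function, and the secondary term in the first plan are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PagePlan:
    primary: str
    synonyms: List[str] = field(default_factory=list)
    secondary: List[str] = field(default_factory=list)

    def targets(self):
        """Every keyword this page is meant to rank for."""
        return [self.primary, *self.synonyms, *self.secondary]


def find_double_targeting(plans):
    """Map each keyword claimed by several pages to those pages' primary terms."""
    owner = {}
    clashes = {}
    for plan in plans:
        for keyword in plan.targets():
            key = keyword.lower().strip()
            if key in owner and owner[key] != plan.primary:
                clashes.setdefault(key, [owner[key]]).append(plan.primary)
            else:
                owner[key] = plan.primary
    return clashes


plans = [
    PagePlan(
        primary="tips for beating january blues",
        synonyms=["how to get over the january blues", "feeling down in january"],
        secondary=["january blues at work"],  # hypothetical secondary term
    ),
    PagePlan(
        primary="how to dye curly hair naturally",
        secondary=["how to dye curly hair naturally for women"],
    ),
    PagePlan(primary="how to dye curly hair naturally for women"),
]

clashes = find_double_targeting(plans)
for keyword, pages in clashes.items():
    print(f"'{keyword}' is claimed by: {pages}")
```

In this example, the “for women” variant is listed both as a secondary term and as its own page, so the check flags it. You would then decide which of the two treatments to keep.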

Conclusion: What’s Next?

So you’ve done your research and built a jam-packed list of long-tail keywords. What’s next?

Well, it’s time to start creating content. A long-tail strategy is an invaluable business asset. But it’s nothing without a well-developed content plan.

SEOs who can target high-value, low-competition keywords can build a steady stream of website visitors and leads. Dedicating time and resources to ongoing research will pay dividends well into the future.