When AI Goes Negative in Healthcare: The Safety Signals That Trigger Brand Criticism in YMYL Search

BrightEdge data reveals that AI treats healthcare brands very differently depending on their category — and negative sentiment, while rare, follows predictable safety-driven patterns that consumer health brands need to understand.

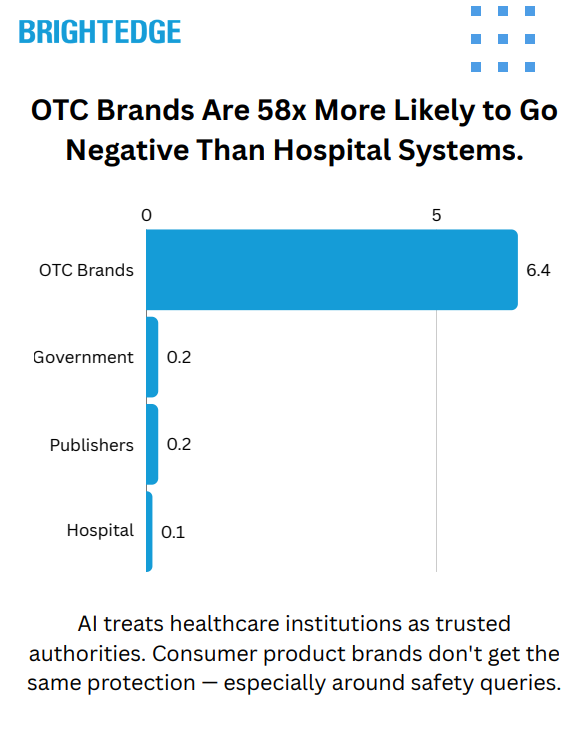

BrightEdge data reveals that when AI engines mention healthcare brands negatively, it's almost never random — it's driven by safety signals. And the gap between how AI treats different types of healthcare sources is dramatic: OTC and pharmaceutical brands are 58x more likely to receive negative sentiment than hospital systems.

Healthcare is the highest-stakes category in AI search. Both Google AI Overviews and ChatGPT treat it as YMYL (Your Money or Your Life) content, applying extra scrutiny to the sources they cite and the claims they surface. AI Overviews now appear on approximately 88% of tracked healthcare queries, and ChatGPT generates an AI response for every query it receives. Both platforms are actively shaping how consumers understand health brands, medications, and institutions at scale.

But YMYL caution doesn't mean brand safety. When AI surfaces contraindications, safety warnings, or adverse effect data, it names specific products — and that creates a sentiment exposure that many healthcare and pharma marketers aren't yet tracking.

So we used BrightEdge AI Catalyst™ to find out what triggers negative sentiment in healthcare AI, who's most at risk, and where Google draws the line on which health topics get an AI-generated answer at all.

The short answer: AI treats healthcare institutions as trusted authorities. Consumer product brands don't get the same protection — especially on safety-related queries.

Data Collected

Using BrightEdge AI Catalyst™, we analyzed:

| Data Point | Description |
| --- | --- |
| Brand sentiment in AI responses | Every brand mention classified as positive, neutral, or negative across both Google AI Overviews and ChatGPT in healthcare queries |
| Citation patterns | Which source types each platform cites for healthcare queries and how citation concentration differs |
| Brand mention visibility | Which healthcare domains are explicitly named in AI-generated responses |
| Sensitive topic analysis | How both platforms handle pregnancy, drug interaction, mental health, sexual health, pediatric, and substance use queries |
| AIO deployment rates | Which healthcare specialties and topic areas trigger AI Overviews, and which Google leaves to traditional organic results |
| Cross-platform comparison | Head-to-head sentiment and citation analysis on healthcare prompts appearing in both engines |

Key Finding

Negative brand sentiment in healthcare AI is rare — but it's structurally concentrated on consumer product brands, triggered almost exclusively by safety-related queries, and absent from institutional sources.

Across both engines, negative brand mentions represent a small share of total healthcare AI references: under 0.5% of all brand mentions carry negative sentiment. But that small percentage is not distributed evenly. OTC and pharmaceutical brands absorb negative sentiment at a rate of 6.4%, while hospital and health systems see just 0.11%. That's a 58x gap.

And the triggers are almost entirely safety-driven: pregnancy contraindications, drug interaction warnings, long-term risk disclosures, and dubious health claim flagging account for the majority of identifiable negative sentiment. AI isn't editorializing about healthcare brands — it's surfacing institutional safety warnings and attaching them to specific products.

The Trust Hierarchy: Not All Healthcare Brands Are Equal

Both platforms treat healthcare sources with a clear hierarchy, and the gap between the top and bottom is enormous.

| Source Category | Positive Rate | Neutral Rate | Negative Rate |
| --- | --- | --- | --- |
| Hospital / Health Systems | 63.6% | 36.3% | 0.1% |
| Health Publishers | 51.0% | 48.8% | 0.2% |
| Government Sources | 45.0% | 54.8% | 0.2% |
| OTC / Consumer Health Brands | 35.8% | 63.5% | 0.7% |

Hospital and health systems sit at the top of the trust hierarchy on both platforms. They're not just cited frequently — they're framed positively at a higher rate than any other category. At 63.6% positive sentiment in ChatGPT, hospital systems are the most "recommended" source type in healthcare AI.

Government sources skew more neutral — they're treated as informational authorities rather than explicitly endorsed. This reflects an interesting editorial distinction: AI trusts government sources for facts but reserves its strongest positive framing for hospital systems.

OTC and consumer health brands sit at the bottom on every metric. Lower positive rates, higher neutral rates, and a negative rate that dwarfs every other category. When we isolate just the negative rate, the disparity is stark:

| Source Category | Negative Sentiment Rate |
| --- | --- |
| OTC / Pharmaceutical Brands | 6.4% |
| Tabloid / Lifestyle Media | 3.4% |
| Health Publishers | 0.25% |
| Government / Medical Associations | 0.20% |
| Hospital / Health Systems | 0.11% |

OTC brands face 58x the negative sentiment rate of hospital systems. This isn't a small difference in degree — it's a structural feature of how AI evaluates different types of healthcare authority.
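As a quick check on the arithmetic, the ratio can be reproduced from the table above. This is a minimal sketch that uses only the published rates; the variable names are illustrative. Note that the 58x figure appears to rest on the unrounded 0.11% hospital rate, since the rounded 0.1% would give 64x.

```python
# Negative sentiment rates (percent), copied from the table above.
negative_rate_pct = {
    "OTC / Pharmaceutical Brands": 6.4,
    "Tabloid / Lifestyle Media": 3.4,
    "Health Publishers": 0.25,
    "Government / Medical Associations": 0.20,
    "Hospital / Health Systems": 0.11,
}

# Hospital systems serve as the baseline for the comparison.
baseline = negative_rate_pct["Hospital / Health Systems"]

# Ratio of each category's negative rate to the hospital-system baseline.
ratios = {name: rate / baseline for name, rate in negative_rate_pct.items()}

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.1f}x the hospital-system rate")
```

Run as written, the OTC / pharmaceutical row comes out at roughly 58 times the baseline, and tabloid and lifestyle media at roughly 31 times.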

Platform Differences: ChatGPT Is More Opinionated

While both platforms show the same trust hierarchy, they differ in how strongly they express it:

| Metric | ChatGPT | Google AI Overviews |
| --- | --- | --- |
| Overall positive rate | 45.1% | 33.5% |
| Overall neutral rate | 54.5% | 66.2% |
| Overall negative rate | 0.4% | 0.3% |
| Avg. brands mentioned per response | 5.8 | 3.8 |
| Top 10 domain citation share | 34.3% | 40.1% |

ChatGPT is more willing to take a position — both positive and negative. It frames sources more favorably, mentions more brands per response, and distributes citations more broadly. Google AI Overviews is more conservative: more neutral, fewer sources per response, and higher concentration on a smaller set of trusted domains.

For healthcare organizations, this creates a strategic split. ChatGPT offers more pathways to visibility (more brands cited, more broadly distributed), but also more editorial exposure. Google AI Overviews is harder to break into but more predictable once you're there.

One brand mention pattern is particularly striking. When we look at which brands are explicitly named in AI responses (not just linked, but mentioned by name), a single UK government health service captures 92.6% of all brand visibility in Google AI Overviews — and 68.1% in ChatGPT. Google's trust in government health authorities, when it comes to naming sources, is nearly monopolistic.
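For teams that want to track concentration in their own data, a metric like the "Top 10 domain citation share" in the table above can be approximated from a simple citation log. The sketch below is an assumption about how such a share might be computed, not a description of how BrightEdge AI Catalyst calculates it. The function name `top_n_share` and the sample domains are hypothetical.

```python
from collections import Counter


def top_n_share(cited_domains, n=10):
    """Return the fraction of all citations captured by the n most-cited domains."""
    counts = Counter(cited_domains)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    top_total = sum(count for _, count in counts.most_common(n))
    return top_total / total


# Hypothetical citation log: one entry per citation observed in AI responses.
sample_citations = (
    ["hospital-a.example"] * 5
    + ["gov-health.example"] * 3
    + ["publisher-b.example"] * 1
    + ["brand-c.example"] * 1
)

# With this toy data, the two most-cited domains hold 8 of 10 citations.
share = top_n_share(sample_citations, n=2)
print(f"Top-2 share: {share:.1%}")
```

A higher share means fewer domains capture more of the citations, which is the sense in which Google AI Overviews is described as more concentrated than ChatGPT.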

The 4 Safety Signals That Trigger Negative Sentiment

When AI does go negative on a healthcare brand, it follows predictable patterns. Nearly all identifiable negative sentiment traces back to safety-related queries — AI surfacing institutional warnings about specific products.

| Trigger Category | Share of Identifiable Negative Mentions |
| --- | --- |
| Drug Interactions / Dosing Concerns | 14% |
| Pregnancy / Maternal Safety | 13% |
| Quick-Fix / Dubious Health Claims | 7% |
| Long-Term Risk / Side Effects | 3% |
| Substance Effects | 2% |

1. Pregnancy and Maternal Safety

One of the two largest identifiable triggers, at 13% of identifiable negative mentions. When users ask whether a medication or supplement is safe during pregnancy or breastfeeding, AI cites institutional contraindication guidance and names the product negatively. Pain relievers, sleep aids, nasal sprays, and cold medications are the most frequent targets.

The pattern is consistent: AI references hospital systems and government health agencies as the authority, and the consumer product takes the negative sentiment hit. The institution providing the warning gets positive or neutral sentiment; the product being warned about gets the negative tag.

Example query patterns: "Can you take [pain reliever] while pregnant?" "What nose spray can I use while breastfeeding?" "What teas are safe during pregnancy?"

2. Drug Interaction and Dosing Concerns

The largest identifiable trigger by share, at 14% of identifiable negative mentions. Queries about combining medications, appropriate dosing, or daily use safety generate negative mentions for the products in question. AI cites medical institutions and government agencies warning about overuse or interaction risks.

Sleep supplements are particularly exposed here — queries about how many to take, whether daily use is safe, and interaction with other medications consistently surface negative sentiment for the product brand while citing hospital systems positively.

Example query patterns: "How many [sleep supplement] gummies should I take?" "Is it OK to take [sleep aid] every night?" "What can I take for arthritis pain while on [blood thinner]?"

3. Long-Term Risk Disclosures

When users ask about the long-term effects of specific medications, AI surfaces published research linking products to adverse outcomes. Certain antihistamines appear negatively in connection with cognitive risk in elderly populations. Statins appear in blood sugar effect discussions. The AI is citing peer-reviewed research — but the brand absorbs the negative sentiment frame.

This is a particularly difficult exposure for pharmaceutical brands because the negativity is evidence-based. AI is accurately representing published research findings, but the brand association is what sticks in the AI-generated response.

Example query patterns: "Medications that increase risk of Alzheimer's" "Long-term use of [benzodiazepine] in the elderly" "Which statin does not raise blood sugar?"

4. Dubious Health Claims

A smaller but notable pattern: tabloid-style health publishers and lifestyle media occasionally receive negative sentiment when AI flags their claims as lacking evidence. Quick-fix health content — "lose belly fat in 1 week," "cure [condition] naturally in 7 days" — gets called out when AI notes the claims aren't supported by medical evidence.

This trigger is unique because it targets content sources rather than products. AI is functioning as a quality filter, distinguishing between evidence-based health information and sensationalized health content.

Example query patterns: "How to lose belly fat naturally in 1 week?" "How to get rid of [condition] fast?"

Where Google Won't Even Use AI: The AIO Deployment Gap

Beyond sentiment, there's a separate dimension of AI caution in healthcare: the topics where Google declines to generate an AI Overview at all, leaving the answer to traditional organic results.

AI Overviews appear on approximately 88% of healthcare queries overall, but the rate varies dramatically by specialty and topic area:

| Healthcare Topic | AIO Deployment Rate |
| --- | --- |
| Gastroenterology | 95.1% |
| Orthopedics | 94.1% |
| Neurology | 94.2% |
| Urology | 93.8% |
| Cardiology | 92.8% |
| Genetics | 89.3% |
| Primary Care | 68.7% |
| Telehealth | 66.7% |
| Eating Disorders | 65.1% |
| Bullying / Behavioral Health | 65.1% |

The pattern is clear: the more emotionally sensitive the health topic, the less likely Google is to deploy an AI-generated summary. Most clinical specialties cluster between roughly 93% and 95% AIO deployment, with genetics somewhat lower at 89.3%. But eating disorders, bullying, and behavioral health drop to about 65%, a gap of roughly 30 percentage points from the top of the clinical range.
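The size of the gap depends on which baseline is used, so it is worth working through the numbers in the table. The sketch below uses only the published rates. Treating the first six rows as the "clinical specialties" group is an assumption that follows the topic categories listed in the methodology.

```python
# AIO deployment rates (percent), copied from the table above.
aio_rate_pct = {
    "Gastroenterology": 95.1,
    "Orthopedics": 94.1,
    "Neurology": 94.2,
    "Urology": 93.8,
    "Cardiology": 92.8,
    "Genetics": 89.3,
    "Primary Care": 68.7,
    "Telehealth": 66.7,
    "Eating Disorders": 65.1,
    "Bullying / Behavioral Health": 65.1,
}

# Assumption: these six rows make up the clinical specialties group.
clinical = [
    "Gastroenterology", "Orthopedics", "Neurology",
    "Urology", "Cardiology", "Genetics",
]

clinical_avg = sum(aio_rate_pct[name] for name in clinical) / len(clinical)
highest = max(aio_rate_pct.values())
lowest = min(aio_rate_pct.values())

gap_from_top = highest - lowest        # top specialty vs. lowest topic
gap_from_avg = clinical_avg - lowest   # clinical mean vs. lowest topic

print(f"Clinical specialty average: {clinical_avg:.1f}%")
print(f"Gap from highest specialty: {gap_from_top:.1f} points")
print(f"Gap from clinical average:  {gap_from_avg:.1f} points")
```

Measured from the highest specialty (gastroenterology), the gap is 30 points. Measured from the mean of the six clinical rows, it is closer to 28 points. Either way, the behavioral health topics sit far below the clinical group.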

Among the specific queries Google avoids answering with AI: domestic violence, emotional abuse, body dysmorphia treatment, binge eating disorder treatment, and substance abuse topics.

The ~12% of healthcare keywords without AI Overviews cluster into recognizable patterns:

| Non-AIO Pattern | Share of Excluded Keywords |
| --- | --- |
| Local / navigational queries | ~11% |
| Abuse and violence topics | ~9% |
| Visual / diagnostic queries | ~7% |
| Body image and eating disorders | ~7% |
| Branded / facility-specific | ~3% |

This deployment gap has direct implications for SEO strategy. For behavioral health topics, traditional organic rankings carry disproportionate weight because Google frequently isn't generating an AI summary to compete with. These are the queries where organic SEO still dominates the user experience.

Sensitive Topic Handling: Both Platforms Engage, Differently

Both platforms engage with sensitive healthcare topics at broadly similar rates — the difference is in how many sources they involve and how they frame the answers.

| Sensitive Topic | ChatGPT Query Share | Google AIO Query Share |
| --- | --- | --- |
| Sexual Health / STIs | 3.5% | 3.1% |
| Pregnancy / Maternal Health | 2.4% | 2.4% |
| Drug Interactions | 2.2% | 3.2% |
| Mental Health | 1.4% | 2.2% |
| Substance Use / Addiction | 1.4% | 1.1% |
| Pediatric Health | 1.0% | 1.2% |

Google AI Overviews shows slightly higher engagement on drug interaction and mental health queries, while ChatGPT shows slightly higher engagement on sexual health and substance use topics. But the key structural difference is that ChatGPT includes 5.8 brands per response vs. 3.8 for Google — giving users more reference points and distributing trust across more organizations for every sensitive query.

Cross-referencing with AIO deployment data reveals the nuance: while Google does answer most sensitive health queries with an AI Overview, it draws clearer lines around abuse, violence, and eating disorder content, where AIO rates fall roughly 30 points below the top clinical specialties.

What This Means for Your Healthcare Brand Strategy

OTC and Pharma Brands Carry the Most Exposure. At 6.4% negative sentiment — 58x the rate of hospital systems — consumer health brands are the primary target when AI surfaces healthcare criticism. This isn't random editorial judgment; it's AI faithfully reflecting what institutional sources say about specific products in safety contexts. The risk is concentrated and predictable.

Safety Queries Are the Trigger — and They're Identifiable. Pregnancy safety, drug interactions, long-term risk disclosures, and dubious health claims account for the majority of identifiable negative sentiment. Brands can map exactly which of their products sit in these query spaces and prioritize proactive safety content accordingly.
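One way to start that mapping is a simple keyword pass over a brand's tracked queries. The sketch below is a rough illustration, not the classification method used in this study. The pattern names and regular expressions are hypothetical, loosely derived from the example query patterns listed for each trigger, and would need tuning against real query data.

```python
import re

# Hypothetical keyword patterns, one per trigger category described above.
TRIGGER_PATTERNS = {
    "pregnancy_maternal_safety": re.compile(
        r"\b(?:pregnan\w*|breastfeed\w*)\b", re.IGNORECASE),
    "drug_interaction_dosing": re.compile(
        r"\b(?:how many|every night|daily|while on|dos(?:e|age|ing))\b",
        re.IGNORECASE),
    "long_term_risk": re.compile(
        r"\b(?:long[- ]term|increase risk|raise blood sugar)\b", re.IGNORECASE),
    "dubious_health_claims": re.compile(
        r"\b(?:in \d+ (?:days?|weeks?)|fast)\b", re.IGNORECASE),
}


def classify_query(query):
    """Return the names of every trigger category whose pattern matches."""
    return [name for name, pattern in TRIGGER_PATTERNS.items()
            if pattern.search(query)]


# Example queries taken from the patterns listed earlier in this report.
queries = [
    "Can you take [pain reliever] while pregnant?",
    "Is it OK to take [sleep aid] every night?",
    "Long-term use of [benzodiazepine] in the elderly",
    "How to lose belly fat naturally in 1 week?",
]

for q in queries:
    print(q, "->", classify_query(q))
```

A keyword pass like this will miss paraphrases and produce some false positives, so it is best treated as a first filter for deciding which queries deserve manual review and dedicated safety content.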

Own the Narrative Before AI Writes It for You. When AI goes negative on a consumer health product, it's citing someone else's warning — a hospital system, a government agency, a peer-reviewed study. The brand that publishes transparent, comprehensive safety guidance gives AI its own language to use. The brand that doesn't leaves the characterization to third parties.

Hospital Systems Are in the Strongest Position. With a 0.1% negative rate and 63.6% positive sentiment, hospital and health systems are the most trusted, most favorably framed source category in healthcare AI. The priority for these organizations isn't defending against negativity — it's ensuring they're in the citation set.

Behavioral Health Requires a Different Strategy. The 30-point AIO gap on eating disorders, bullying, and abuse content means traditional organic SEO carries outsized importance for behavioral health organizations. These topics also represent an opportunity in ChatGPT, which does answer these queries and cites broadly.

Monitor Both Platforms — They Tell Different Stories. ChatGPT is more opinionated (higher positive and negative rates) and cites more broadly (5.8 brands per response). Google AI Overviews is more conservative (more neutral) and more concentrated (top 10 domains capture 40.1% of citations). A brand's reputation in one platform may look completely different in the other.

Technical Methodology

| Parameter | Detail |
| --- | --- |
| Data Source | BrightEdge AI Catalyst™ |
| Engines Analyzed | Google AI Overviews, ChatGPT |
| Sentiment Classification | Brand-level sentiment (positive, neutral, negative) for every brand mentioned in healthcare AI responses |
| Citation Analysis | Domain-level citation tracking including visibility share, citation concentration, and source categorization |
| AIO Deployment Tracking | Separate multi-specialty healthcare keyword set tracking AI Overview presence/absence across clinical and general health categories |
| Topic Categories | Healthcare specialties (gastroenterology, orthopedics, neurology, urology, cardiology, genetics), general health (primary care, behavioral health, eating disorders, telehealth) |
| Sensitive Topic Classification | Pregnancy/maternal, drug interactions, mental health, sexual health/STIs, pediatric, substance use |
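To make the sentiment classification row concrete, here is a minimal sketch of how brand-level labels could be rolled up into per-category rates like those reported earlier. It assumes each brand mention has already been labeled and assigned a source category. The function name, category keys, and sample records are hypothetical and do not reflect the internals of BrightEdge AI Catalyst.

```python
from collections import Counter, defaultdict

LABELS = ("positive", "neutral", "negative")


def sentiment_rates(mentions):
    """Aggregate (source_category, sentiment_label) pairs into per-category rates."""
    tallies = defaultdict(Counter)
    for category, label in mentions:
        tallies[category][label] += 1

    rates = {}
    for category, counts in tallies.items():
        total = sum(counts.values())
        # Counter returns 0 for labels that never occurred in a category.
        rates[category] = {label: counts[label] / total for label in LABELS}
    return rates


# Hypothetical labeled brand mentions, one tuple per mention.
sample_mentions = [
    ("hospital", "positive"),
    ("hospital", "positive"),
    ("hospital", "neutral"),
    ("hospital", "neutral"),
    ("otc_brand", "positive"),
    ("otc_brand", "neutral"),
    ("otc_brand", "neutral"),
    ("otc_brand", "negative"),
]

rates = sentiment_rates(sample_mentions)
for category, by_label in rates.items():
    print(category, {label: f"{value:.0%}" for label, value in by_label.items()})
```

The three rates for each category sum to 100%, which matches the structure of the trust hierarchy table.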

Key Takeaways

| Finding | Detail |
| --- | --- |
| 58x Negative Sentiment Gap | OTC/pharma brands face a 6.4% negative rate vs. 0.1% for hospital systems. AI structurally favors institutional healthcare sources over consumer product brands. |
| 4 Predictable Safety Triggers | Pregnancy safety, drug interactions, long-term risk disclosures, and dubious health claims drive the majority of identifiable negative sentiment. All are safety-signal driven. |
| Hospital Systems Are Most Trusted | 63.6% positive sentiment, the highest of any source category. AI treats hospital systems as the most recommended, most authoritative healthcare source. |
| Government Sources Dominate Brand Mentions | A single UK government health service captures 92.6% of Google AIO brand mentions and 68.1% of ChatGPT mentions, near-monopoly status. |
| 88% of Healthcare Queries Get AI Overviews | But behavioral health topics (eating disorders, bullying, abuse) drop to 65%. A 30-point gap where organic SEO still dominates. |
| ChatGPT Distributes Trust More Broadly | 5.8 brands per response vs. 3.8 for Google. Lower citation concentration. More pathways to visibility for a wider range of healthcare organizations. |

Published on February 26, 2026